Rayan Noronha

Data & AI Platform Engineer

I design and own production-grade data platforms end-to-end — event-driven pipelines, RAG systems, agentic AI workflows, and cloud-native infrastructure — across regulated industries including banking, healthcare, and government. I work without waiting for direction.

Built for regulated environments.

I build the data infrastructure that enterprises depend on. Scalable, resilient pipelines with full lineage, auditability, and compliance built in from day one — not bolted on after something breaks.

My background spans banking and financial services, healthcare, government, agriculture, and education. Working across regulated industries has shaped how I think about data engineering: correctness, recoverability, and auditability are the foundation, not features.

On the AI side, I build the infrastructure layer that makes intelligent systems production-ready — RAG pipelines with hybrid vector and keyword retrieval, agentic orchestration patterns, embedding pipelines, and the observability layer that tells you when something goes wrong at 2am.

I set engineering standards and own the full stack: containerised workloads on Docker and ECS, pipeline orchestration with Airflow, infrastructure as code with Terraform, CI/CD with GitHub Actions, and structured observability with Prometheus and Grafana. Everything I ship is documented — C4 diagrams, Architecture Decision Records, runbooks — because production systems need to be operable by someone other than the person who built them.

Sectors

Tech Stack

Built to principal-level standards. Not tutorials.

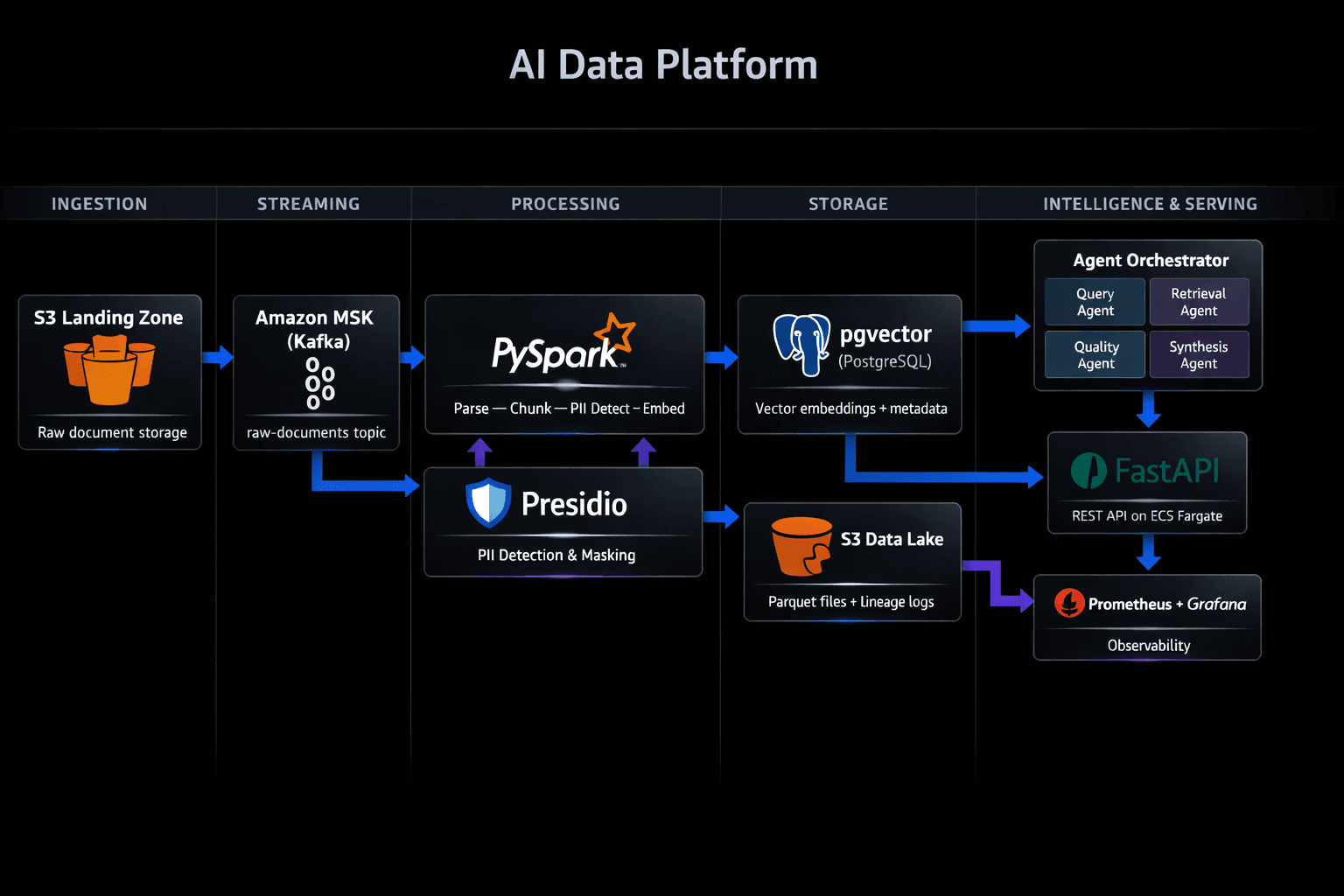

AI-Powered Data Pipeline with RAG & Agentic Workflows

Live

Production RAG system built on event-driven infrastructure. Documents flow from S3 through Kafka into a processing pipeline that parses, chunks, detects and masks PII, generates embeddings, and stores them in pgvector. Four specialised AI agents (query understanding, hybrid retrieval, data quality validation, and answer synthesis) coordinate via an orchestrator to return cited, source-validated answers in under 200ms. Built to enterprise standards: containerised with Docker, CI/CD via GitHub Actions, Prometheus metrics, structured logging, health checks on all dependencies, and 11 Architecture Decision Records documenting every significant trade-off, including a cost optimisation decision that reduced vector database costs by 75%.

4 AI Agents in sequence

175ms end-to-end latency

11 Architecture Decision Records

Hybrid vector + keyword search

PII detection across 8 entity types

53 unit tests · CI green
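
As an illustration of the hybrid vector + keyword search idea, here is a minimal sketch of one common way to merge two ranked result lists: reciprocal rank fusion. This page does not say which fusion method the project uses, so treat the technique, the function name `reciprocal_rank_fusion`, the constant `k=60`, and the sample document ids as assumptions made for the example.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of document ids into a single ranking.

    Each document scores 1 / (k + rank) for every list it appears in,
    so documents ranked well by both retrievers rise to the top.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest score first; ties broken by document id for determinism.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical results: one list from vector similarity, one from keyword search.
vector_hits = ["doc3", "doc1", "doc7"]
keyword_hits = ["doc1", "doc9", "doc3"]

fused = reciprocal_rank_fusion([vector_hits, keyword_hits])
print(fused)
```

Rank-based fusion avoids having to normalise cosine similarities against keyword scores, which live on different scales. A weighted score blend is an equally plausible design.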

Insurance Data Platform Modernisation

In Progress

Legacy SSIS/SQL Server migration to a modern Snowflake + dbt + Azure stack. Insurance domain modelling (claims processing, policy management, regulatory reporting) built for auditability and compliance from the ground up.

Real-Time Data Governance Engine

Planned

Real-time compliance and governance for regulated industries. CDC pipelines from transactional databases, automated PII classification at ingestion, data lineage tracking, and a policy engine that flags non-compliant data flows before they reach downstream consumers.
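
To make the policy engine idea concrete, here is a small sketch of a rule that flags a data flow carrying PII to a destination not cleared for it. The project is still planned, so everything here is hypothetical: the `DataFlow` and `Violation` types, the `check_flow` function, the rule name, and the sample systems are assumptions for illustration, not the project's design.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataFlow:
    source: str
    destination: str
    pii_tags: frozenset  # PII classes attached at ingestion, e.g. {"email"}
    destination_cleared_for_pii: bool


@dataclass(frozen=True)
class Violation:
    rule: str
    detail: str


def check_flow(flow):
    """Return the policy violations for one flow (an empty list means compliant)."""
    violations = []
    # Hypothetical rule: PII may only reach destinations cleared to hold it.
    if flow.pii_tags and not flow.destination_cleared_for_pii:
        tags = ", ".join(sorted(flow.pii_tags))
        violations.append(
            Violation(
                rule="pii-to-uncleared-destination",
                detail=f"{flow.source} -> {flow.destination} carries: {tags}",
            )
        )
    return violations


# Hypothetical flow: customer emails heading to an uncleared analytics store.
flow = DataFlow("crm_db", "marketing_lake", frozenset({"email"}), False)
for violation in check_flow(flow):
    print(violation.rule, "|", violation.detail)
```

Running the check before the flow is published is what lets the engine flag a problem before it reaches downstream consumers, instead of after the data has landed.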